Every AI agent needs a database it can break

AI agents need two infrastructure layers: real-time streaming for fresh data and database branching for sandboxes. How to build both with Postgres.

By:

Claudiu DascalescuPublished:

Reading time:

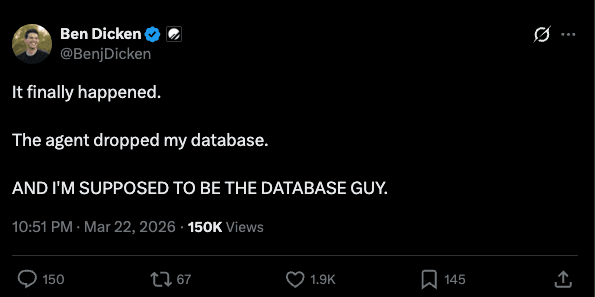

7 min readBen Dicken tweeted that an agent dropped his database.

That’s not a one-off story. It’s what happens when you give autonomous systems real access to production database.

Meanwhile, IBM just paid $11 billion for Confluent. Not for AI models, but for the infrastructure that keeps data fresh, consistent, and flowing in real time. That investment makes a lot of sense: teams building AI are discovering quickly that getting the model to work is one project. Getting the data infrastructure underneath it to hold up in production is a different, much harder one.

But Confluent solves only part of the problem: keeping data fresh.

There’s a second layer that’s just as critical, and much less explored: giving every AI agent a database it can safely break.

Two things your AI needs from the database

Your fraud detection model needs to score transactions in real time. Your recommendation engine needs to personalize based on what the user did 30 seconds ago, not yesterday. Your AI agent needs to query a database, try some mutations and maybe roll them back if the plan doesn't work out.

All of these need two things:

- Fresh data flowing in. Production changes reaching the AI system in real time, not via a nightly batch job.

- Somewhere safe to operate. A database the AI system can actually read from, write to and experiment with, without touching production.

The IBM-Confluent acquisition addresses #1. Real-time streaming. Get the data moving. Kafka, connectors, schema management, the whole pipeline from database to wherever you need the data.

But #1 alone doesn't get you far because once the data is flowing, what do you do with it?

Layer 1: Streaming

Instead of ETL jobs that run overnight, your production database changes propagate to downstream systems continuously.

The Confluent stack for this looks like:

That's a lot of moving parts. Confluent Cloud manages it all for you, or you can self-manage with Confluent Platform (Kafka brokers, Schema Registry, Connect workers, Flink, the full orchestra).

For Postgres teams specifically, there's a simpler option: pgstream. It's an open-source CDC tool that captures Postgres WAL changes and delivers them to Postgres replicas, Elasticsearch, OpenSearch, webhooks or Kafka. All in a single Go binary.

Changes typically reach the target within seconds of being committed to the source, depending on your batch configuration and target write speed.

pgstream also handles automatic DDL replication. When someone runs ALTER TABLE ADD COLUMN on the source, the schema change propagates to all targets automatically. pgstream uses event triggers baked into the Postgres WAL to track DDL changes as first-class events.

By contrast, Confluent's Debezium connector struggles with schema changes. You need Schema Registry, careful versioning and manual coordination to keep things in sync.

Fair comparison: Confluent has 120+ pre-built connectors, Apache Flink for complex stream processing, enterprise governance, and multi-cloud support. pgstream has four targets and a 12MB binary. Different tools for different scales. But if your source is Postgres and your targets are Postgres, Elasticsearch or Kafka, pgstream does the job with 2 processes instead of 6.

Either way, Confluent or pgstream, Layer 1 gets your data flowing. Your AI system can now see what's happening in production, in real time.

Necessary but not sufficient.

Layer 2: Branching (where your agent actually works)

Your AI agent needs to query a database. Not a read replica. A database it can actually write to. Maybe it needs to try an INSERT to test a hypothesis, run a migration or stage data for a multi-step workflow.

Dumping a 1TB database takes hours and costs full storage per copy. Read replicas are read-only, so they're out. And you definitely can't let the agent write to production. So where does it go?

This is the second layer: disposable database copies that don't cost you a full copy each.

Database branching uses copy-on-write storage to fork a live Postgres database into independent, writable copies. Each branch shares unchanged data pages with the source and only stores its own modifications. Instead of duplicating every data page, you share the pages with the source and only store what changes. Same data, independent working copy, throw it away when you're done.

50 branches of a 1TB database don't cost 50TB of storage. They cost 1TB (shared) plus whatever each branch actually changes. If each branch modifies 0.1% of the data, you're looking at ~1.05TB total, not 50TB.

Each copy is real Postgres. Same connection string format, same wire protocol. psql, Prisma, Django ORM, your agent's database library. They all work unchanged. Here's what it looks like:

This is what we've been building at Xata. Branches fork a live database. Your CI pipeline opens a PR, creates a branch, runs migrations and tests against production data, tears it down on merge. An AI agent gets its own branch to operate freely without affecting anything else.

What happens when you plug them together

Say you're building an AI agent platform for e-commerce. Your agents help merchants optimize pricing, forecast inventory and detect anomalies. Each agent needs two things from the database:

Reading fresh data. Agent #42 asks "what were the top-selling items in the last hour?" That query hits an Elasticsearch index that pgstream keeps in sync with production. The data is seconds old, not hours old. The agent gets an accurate picture of what's happening right now.

Writing safely. Agent #42 has a hypothesis: "if we drop the price of SKU-7891 by 12%, we'll clear the excess inventory by Friday." To test this, the agent needs to INSERT a pricing change, run the forecast model against the updated database and see what happens. It does this on its own Xata branch, a full Postgres copy where it can mutate freely. If the forecast looks good, the agent reports the recommendation. If not, the branch gets deleted. Production never knew it happened.

In code, Agent #42's workflow looks roughly like this:

Neither layer alone is sufficient. Without streaming, the agent's search queries return yesterday's data and the pricing recommendation is based on stale inventory. Without branching, the agent has nowhere to run its what-if simulation. You're not going to let it INSERT experimental pricing changes into production.

Here's the combined architecture:

pgstream handles the read path: fresh data, everywhere it needs to be. Xata handles the write path: safe, disposable sandboxes for experimentation. Same source database, two different infrastructure needs.

50 agents, 1TB and the math that breaks pg_dump

Let's say you're running an AI agent platform. 50 concurrent agents, each needing its own database sandbox with production data.

pg_dump/restore | Branching (Xata) | |

|---|---|---|

Storage | 50TB (50 full copies) | ~1.05TB (shared + deltas) |

Compute | 50 always-on Postgres instances | Scale-to-zero; pay only when active |

Monthly cost | $150K-$400K (50 x RDS db.r6g.xlarge at 1TB each) | ~1TB shared storage + scale-to-zero compute. Ballpark 10-20x less depending on workload |

Copy creation | Hours per copy | near instant |

At 200 agents, infrastructure costs rise fast. 200 full copies of a 1TB database is 200TB of storage alone, and someone has to explain that bill to finance. With branching, it's still ~1TB shared plus deltas.

Trade-offs

Database branching is a new category. Streaming has had 10+ years to mature. Kafka launched in 2011. Confluent has been building on it since 2014. Database branching for production workloads is newer, so the tooling, patterns, and best practices are still being established. That said, the underlying technology (copy-on-write at the storage layer) is well understood, and the use cases are clear. The category is new, but the need isn't.

pgstream's scope is intentionally narrow (see the fair comparison above). If your source isn't Postgres or your targets aren't supported, it's not the right tool. That's by design. pgstream trades breadth for simplicity, focusing entirely on getting changes out of Postgres reliably instead of trying to be a general-purpose streaming platform.

IBM's $11B bet says real-time data streaming is now critical enterprise infrastructure. Streaming tooling is mature. Database branching is newer, but every AI agent that touches a database needs a sandbox, and pg_dump isn't getting any faster.

IBM just validated Layer 1. Layer 2 is next.

If you want to try either layer:

- pgstream: github.com/xataio/pgstream.

brew install pgstream, point it at your Postgres, and go. - Xata: xata.io. Connect your existing Postgres, create your first branch, and

psqlinto it.

pgstream is open source (Apache 2.0), built by the Xata team. Xata is a managed Postgres platform with copy-on-write branching.

FAQ

What database infrastructure do AI agents need? Two layers: (1) real-time streaming to keep data fresh via CDC tools like Confluent or pgstream, and (2) database branching to give each agent a writable sandbox with production data that doesn't affect the source.

How does database branching work for AI agents? Database branching uses copy-on-write storage to create writable Postgres copies that share data pages with production. Each agent gets its own branch, can INSERT/UPDATE/DELETE freely, and the branch is deleted when done. Production is never affected.

How much does it cost to give every AI agent its own database? With pg_dump, 50 copies of a 1TB database costs 50TB of storage ($150K-$400K/month on RDS). With database branching, the same 50 copies share storage and cost roughly 1.05TB plus compute only when active -- ballpark 10-20x less.

What is pgstream? pgstream is an open-source change data capture (CDC) tool for Postgres. It reads the Postgres write-ahead log (WAL), captures row-level changes and DDL schema changes, and delivers them to downstream targets: Postgres replicas, Elasticsearch, OpenSearch, webhooks or Kafka. It ships as a single 12MB Go binary.

What is the difference between pgstream and Confluent? Confluent has 120+ connectors, Apache Flink for stream processing and multi-cloud enterprise support. pgstream supports four Postgres-specific targets in a 12MB binary. pgstream handles DDL replication automatically; Confluent requires Schema Registry and manual coordination for schema changes. Different tools for different scales.

Can AI agents safely write to a production database? Not directly. Database branching solves this by giving each agent a writable copy-on-write branch. The agent can INSERT, UPDATE, DELETE, or even DROP TABLE on its branch without affecting production. When done, the branch is deleted.

Experience Xata with $100 free credits

Give every agent its own Postgres branch with real data, instantly, without killing your budget.

Related Posts

Database branching for AI coding agents: a minimal, CLI-first setup that actually works

Learn how to enable database branching for coding agents like Claude Code and Amp Code using simple Xata CLI instructions in AGENTS.md. No complex skills required.

Closing the loop: Building a coding agent that uses Postgres branches

Explore how to build an AI coding agent that follows a full developer workflow, including creating Postgres branches, using a sandbox, fixing bugs, and raising pull requests with Xata, Vercel, and GitHub.

Teaching Claude Code to use isolated database branches with Agent Skills

Learn how to set up the Xata Agent Skill in minutes. Enable Claude Code to access realistic data using isolated database branches for safer, faster debugging.

From DBA to DB Agents

Discover how a PostgreSQL DBA’s decade of expertise evolved into designing Xata’s AI-powered Postgres agent, automating monitoring and observability.