Code Review for Vibe Coders

Product managers and marketers are now submitting code with AI. Here's how to write PRs that engineers actually want to review, approve, and merge.

By: Richard Gill

Reading time: 8 min read

AI coding is enabling product managers, marketers, salespeople and others to contribute code directly. But whilst the coding part is easier than ever, the hard part is convincing the engineering team to merge and ship the code.

I've been on both sides of code review for more years than I'd like to admit. Non-engineers are now submitting AI-generated PRs, and I've been enjoying helping them get merged. This post lays out the advice I give to new coders submitting their first PRs.

But first, the obvious question...

Why do software teams code review?

If you're new to coding you might be thinking: "If the code works, why not just ship it?" Fair question.

The software industry learned the hard way: if you don't take care with code, it gradually becomes a tangled mess. This "tech debt" lets you ship fast today, but makes every subsequent change slower and harder.

Having a second human review the code keeps everyone honest, catches blind spots, and provides wider context to keep things on track.

At its core, a code review is a negotiation between submitter and reviewer - trading off short-term shipping speed against long-term maintainability.

But hasn't AI solved coding?

AI coding isn't (yet?!) perfect. Today, without supervision from an engineer with taste [1], AI cannot produce pull requests of the same quality as an engineer.

[1] Engineering with taste

These limitations also mean AI cannot elevate a new coder to the level of a senior engineer.

Even worse, the probabilistic next-token nature of LLMs means that AI-generated code sits in the "uncanny valley" of looking "about right". This is actually worse than obviously wrong code, because it takes real effort to spot the subtle issues hiding underneath.

Reviewers also have very little signal about how the code was produced. Did a human carefully review and test every line, or just accept some sloppy AI-generated code?

Getting your PRs reviewed and merged quickly

There is really one simple rule: optimize for your reviewers. Make it easy for engineers to hit approve without facing negative consequences.

Some common negative consequences for engineer reviewers:

- The PR breaks the system - this is now the engineer's fault / responsibility.

- Code reviews take up engineers' time, when they're supposed to be working on something else.

- The PR messes up the code, adding "tech debt", which causes engineers pain later on.

My advice is to go the extra mile, and show you put in as much human effort as possible. Humans tend to be more willing to put in the effort to get a PR merged if they can see a human on the other side has put in effort.

Pre-AI PR best practices

Since you're a new coder, let's start by covering the ground rules engineers had for code reviews before AI came along:

Keep PRs small

- Measure PR size with: number of files changed and total lines of code changing.

- Not all code is equal: auto-generated code or "boilerplate" code (necessary, but high repetition) counts less in the line count than hardcore logic changes.

- If your PR is getting too big, find ways to break it into smaller PRs.

- Smaller PRs are easier to review and lower risk to merge, which makes them easier to ✅.

- If in doubt, favor smaller PRs.

- There is a trade-off here: you shouldn't submit a single change as twenty 1-line PRs!

- Rule of thumb: I like ~3-7 screen-heights of code diff as a size.
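If you want a number rather than a gut feeling, the two measures above can be computed from the output of `git diff --numstat`, which prints added lines, deleted lines and the path for each file, separated by tabs. The sketch below is only an illustration: the list of "generated" file suffixes and the 0.1 discount applied to them are assumptions made up for this example, not a standard.

```python
from dataclasses import dataclass

# Hypothetical choices for this sketch: which paths count as generated or
# boilerplate, and how heavily to discount them. Tune both for your repo.
GENERATED_SUFFIXES = ("package-lock.json", ".lock", ".snap")
GENERATED_WEIGHT = 0.1


@dataclass
class PrSize:
    files_changed: int
    lines_changed: int
    weighted_lines: float


def pr_size(numstat: str) -> PrSize:
    """Summarize text produced by `git diff --numstat`."""
    files = 0
    lines = 0
    weighted = 0.0
    for row in numstat.splitlines():
        parts = row.split("\t")
        if len(parts) != 3:
            continue  # skip blank or unexpected rows
        added, deleted, path = parts
        files += 1
        if added == "-" or deleted == "-":
            continue  # binary file: git reports "-" instead of line counts
        changed = int(added) + int(deleted)
        lines += changed
        weight = GENERATED_WEIGHT if path.endswith(GENERATED_SUFFIXES) else 1.0
        weighted += changed * weight
    return PrSize(files, lines, weighted)


# Made-up sample input: one logic change, one lockfile, one image.
sample = "12\t3\tsrc/pricing.py\n400\t380\tpackage-lock.json\n-\t-\tlogo.png\n"
size = pr_size(sample)
print(size)
```

In the sample, the lockfile dominates the raw line count, while the weighted count stays close to the size of the logic change a reviewer actually has to read.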

Every PR should be mergeable on its own

- If you never shipped a follow-up PR, the merged code should still be fine on its own.

- More complex changes sometimes need to break this rule, but it's a good default.

Make your PR easy to approve ✅

When you create a PR you should include:

- The "motivation" of the change you're trying to make

- Why are you doing a PR in the first place?!

- How did you test your changes?

- Use preview deploys to test your changes work before they hit production.

- Attach screenshots and videos of what you tested / confirmed to the PR description.

- Was there some non-obvious info you discovered along the way that your reviewer might question?

- If this info is evergreen - add a comment in the code so it lives beyond the PR.

- If the info is temporary put it in the PR description or a GitHub comment in the PR diff.
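One way to hold yourself to the checklist above is a tiny script that tells you which sections of a draft description are still empty. This is a minimal sketch: the heading names ("Motivation", "How I tested") and the `## ` heading style are assumptions for illustration, not a GitHub convention. It only checks that you wrote something; the words themselves are still yours to write.

```python
# Hypothetical section names for this sketch; use whatever your team expects.
REQUIRED_SECTIONS = ("Motivation", "How I tested")


def missing_sections(description: str) -> list[str]:
    """Return the required sections that are absent or empty in a PR description."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in description.splitlines():
        if line.startswith("## "):
            # A new heading starts a new (so far empty) section.
            current = line[3:].strip()
            sections[current] = []
        elif current is not None and line.strip():
            # Any non-blank line counts as content for the current section.
            sections[current].append(line.strip())
    return [name for name in REQUIRED_SECTIONS if not sections.get(name)]


draft = """## Motivation
Sales keeps asking for a calculator on the pricing page.

## How I tested
"""
print(missing_sections(draft))
```

Here the draft has a motivation but nothing under the testing heading, so that section is reported as missing.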

Self-review your PR before requesting review

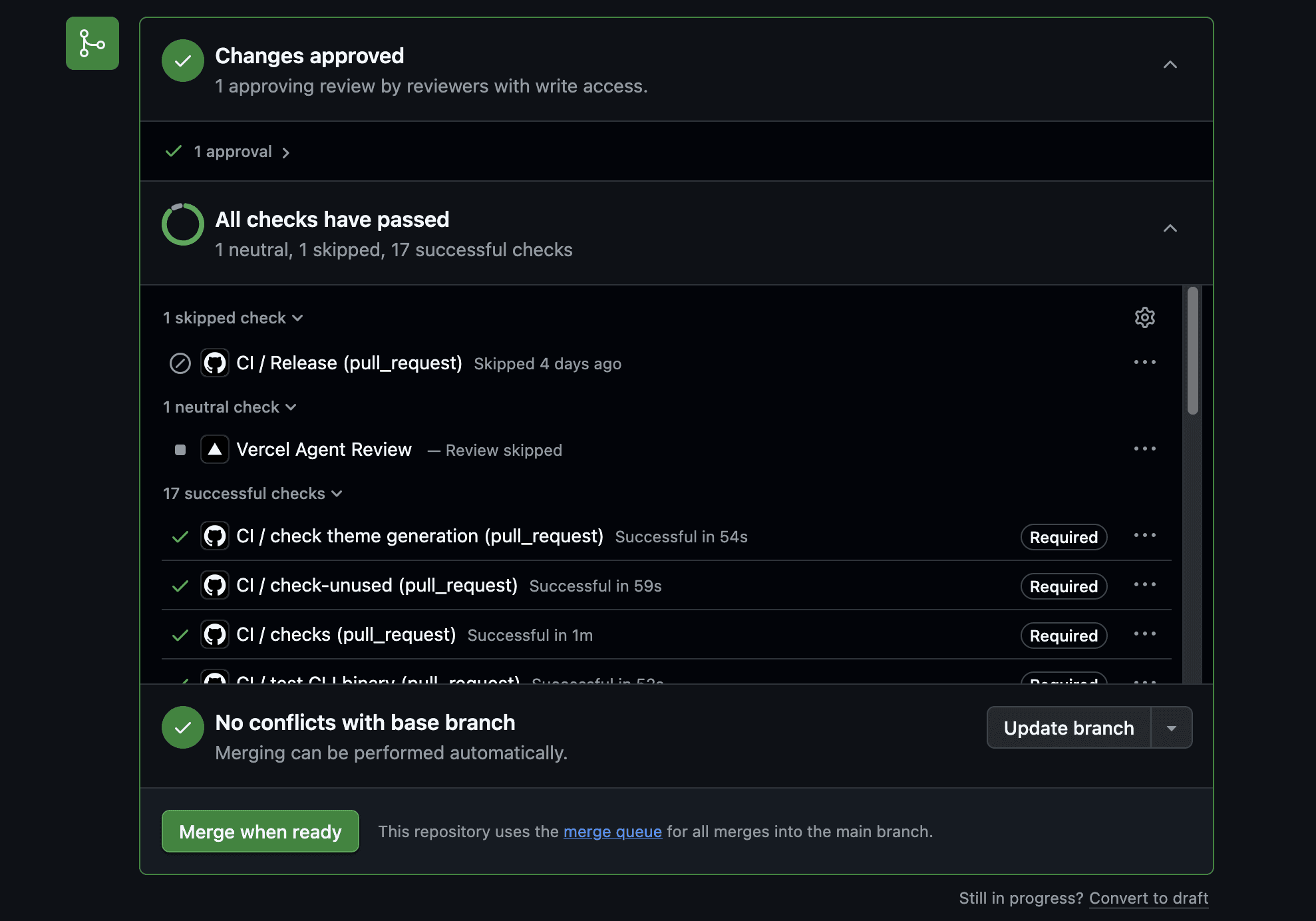

- Make sure all GitHub checks (at bottom of PR) are green before you ask anyone to review your code.

Green GitHub checks

- Review your code on GitHub yourself as best as you can before getting another human to look at it.

- Always do it on GitHub so you see exactly what a reviewer will see.

- Use the "draft" status in GitHub so people know it's not ready for review.

- Re-read the title, description, code & GitHub checks -> fix mistakes -> re-read everything again -> fix mistakes … until you cannot see any more mistakes.

- As an engineer, I spend 30-50% of my effort self-reviewing and "cleaning up" at the end of my PRs.

Be nice

- Overcompensate by being nice, friendly and collaborative.

- There is a natural tension with your reviewer. Defuse it with kindness!

- Default to implementing all your reviewer's suggestions. Save pushback for the hills worth dying on.

AI-era PR advice

Disclaimer: Things are moving fast. These are my do's and don'ts for PRs with AI, as of April 2026.

AI is a powerful tool, but it currently has limitations.

You own the output

- Don't trust AI fully. Always review its output (the code!).

- You, and you alone, are responsible for its output. You need to own it.

- Try to understand what AI can and can't do.

- Be careful what you outsource to AI.

- Important or high-level things still need a human in the loop.

Be transparent about AI usage

AI produces output that looks ~human, but may contain issues. This makes it hard for the reviewer to know what is human-generated and what is AI-generated.

- Give reviewers a signal of which output received human effort and review.

- You can do this by showing that you "took the care" (we can all spot AI slop!).

- Never present AI slop as your own work.

- If something is AI-generated and not properly human-reviewed, explicitly signal this is the case (sometimes this is fine).

- To reduce the reviewer’s burden, you should human-review as much of the output as possible before submitting it.

- If something is heavily vibe coded or is an experiment, explicitly communicate this is the case and what your expectations are.

- Example 1: "I vibed this just to see if it would work. Not expecting to merge it in this state".

- Example 2: PR Title: "[Experiment] New pricing page with calculator".

- Do this even if you heavily reviewed the content but it still looks AI-generated (more relevant with words than code).

Write PR titles and descriptions by hand

- Title and description are the highest-leverage part of the PR.

- Take the time to write them by hand to show you took the care.

- AI-generated descriptions tend to summarize "what changed", which the diff already shows.

- A good description focuses on the motivation for the PR.

- What does the reviewer need to know that isn't in the code: the why, the trade-offs, anything non-obvious.

Advice for new coders

Since you're new to submitting PRs, you're relying on AI to close the gap to get the code for your PR done. You won't (yet!) have "good taste" about the code and will be missing context. This means that even if you put in all the effort in the world, you are less likely to get the code into a mergeable state than an engineer on the team.

Choose tasks where AI can follow existing code patterns

- Changes where a strong precedent has already been set in the code tend to go better.

- AI can just "fit in" with pre-existing code patterns.

- Examples: A new option, a small tweak, a new website page nearly identical to an existing one.

- Ask the AI: "Is there a similar pattern where this code base does [similar thing]?".

Large PRs can backfire

Larger functionality changes generate a lot of code. If the changes begin going wrong, they can place a heavy burden on the reviewer.

- In some cases, helping fix a busted PR is more work for the reviewer than doing it from scratch, because cleaning up the code takes longer than writing it right the first time.

- If the reviewer needs to put in significant work to help get the PR merged now, the PR is circumventing any kind of prioritization process in the team and effectively jumps the queue. The PR becomes more like a GitHub "issue" of: "hey we need to do this thing".

- For these reasons it needs to be OK for reviewers to fully reject large complex PRs and prioritize them using the normal process.

Ask for direction early

If you realize things are going wrong, or you're very out of your depth: Ask upfront.

Example: "Hey I'm trying to do [confusing thing X]. Any idea how I should tackle this?". Spend time on this message to make it very low effort to consume.

- This allows the reviewer to nudge things in the right direction earlier, and shifts more of the work back to you and away from them.

- This is also a natural point to say: "This is too big / difficult a change / we can't tackle this right now" - this is an OK outcome.

- Asking early gets buy-in from engineers because it gives them some agency over whether the work happens at all.

A PR "anchors" the negotiation towards "merge". It's unpleasant to have to reject PRs and not help people get their work merged, especially if they've put effort into it.

A PR is a very direct way of initiating a piece of work (you're literally doing the work). Use your judgement to decide whether it's more productive to ask questions before firing off a PR.

Keep upskilling

- Level yourself up along the way based on review feedback.

- Take detours to improve your code.

If you get better at coding it's a win-win-win!

Welcome to the software industry

Having so many new coders join the software industry at once is exciting! But inevitably, it's going to cause some growing pains.

I know that us engineers can seem a bit intimidating. But if you understand why we do code reviews and put in the effort to meet us in the middle - we're actually a pretty friendly bunch!

See you on some PRs soon.

Whispers chanting: "One of us, One of us..."

Appendix: PR jargon

- LGTM -> "Looks good to me"

- Nit -> "I'm nitpicking, this change is optional, but I would be happy if you did change it"

- WIP -> "Work in progress", not yet ready to be merged

- Raise a PR -> Create a PR
